If quantum computers were such an imminent threat, why haven’t they factored any number larger than 15 yet?

It’s a very catchy critique indeed: wouldn’t we expect at least some progress? How much should we be concerned by these very expensive machines that are easily outperformed by an 8-bit Home Computer, an Abacus, and a Dog? It’s certainly funny, but the argument doesn’t hold water on closer inspection.

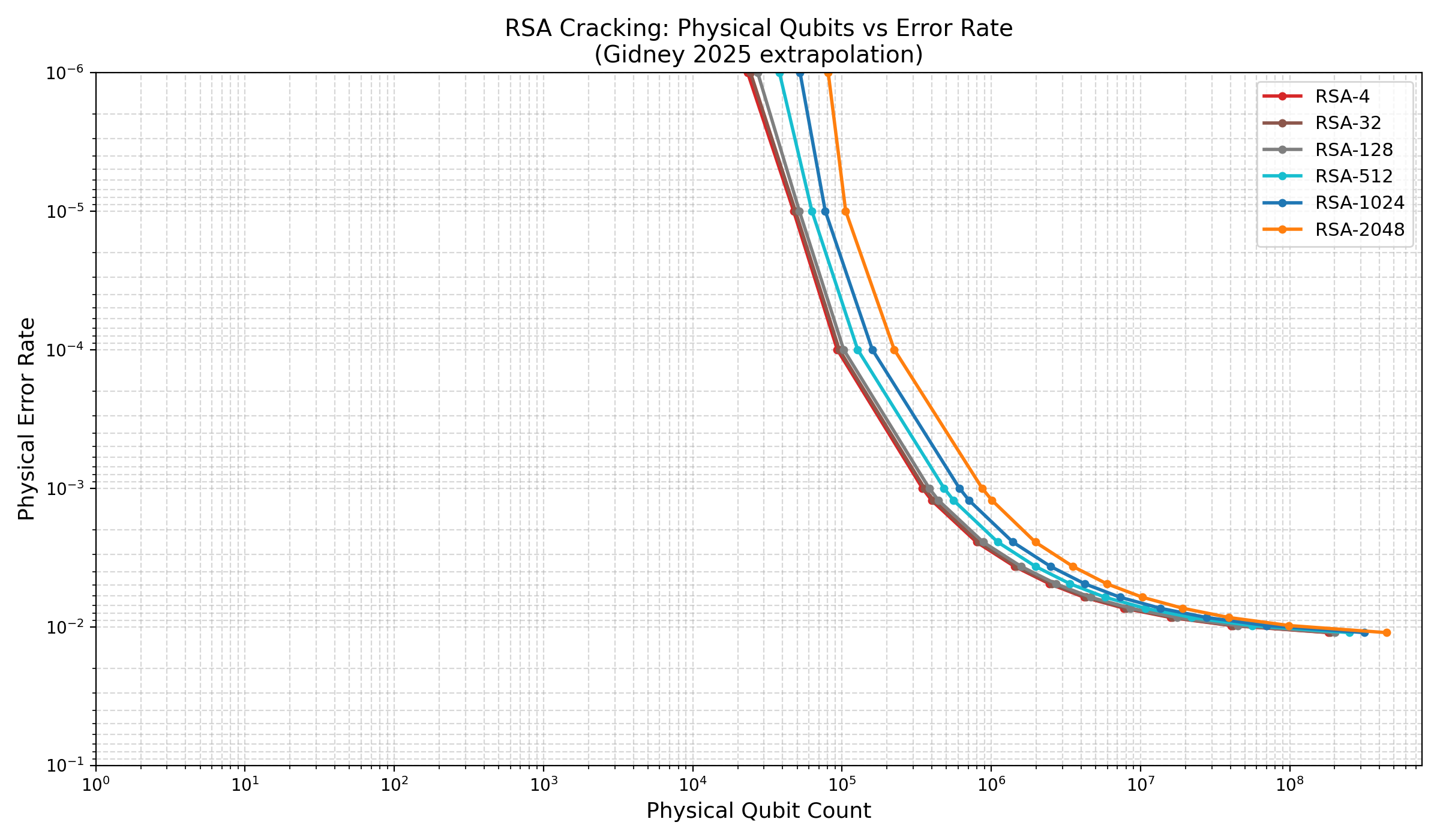

The fact of the matter is that quantum computers require error correction, and error correction has a significant baseline overhead. Below is an extrapolation of Sam Jaques’ extrapolation (of that famous graph) of Gidney’s algorithm attacking RSA-2048 using a conservative superconducting qubit architecture.

By the day a quantum computer beats a classical computer at factoring, heck, by the day it factors a 32-bit number, we will already be uncomfortably close to Q-day. So: don’t wait for quantum computer factoring records; you’ll be too late.

In the field, this is an entirely uncontroversial opinion; see, e.g.:

- Craig Gidney’s “Why haven’t quantum computers factored 21 yet?”

- Slide 19 of Adam Zalcman’s RWPQC 2026 talk

- Sam Jaques’ PQCrypto 2025 talk

- Scott Aaronson compares it to expecting a small nuclear explosion.